Every so often, amidst the hundreds of science and engineering talks I hear every year, there's one new technology that seems straight out of Star Trek. Here, I report on one: a new high-density multi-electrode array (HD-MEA) that was just presented at Stanford in 2016 by Professor Andreas Hierlemann, who leads the group that developed it at ETH Zurich (Basel campus) in Switzerland. (Prof. Hierlemann was hosted and introduced by Stanford's Professor EJ Chichilnisky, who is collaborating with him to study the physiology of the retina.)

Most of the MEA work I hear about involves arrays containing a few hundred electrodes used for recording intracranially from the human cerebral cortex, i.e., electrocorticography (ECoG). Other commonly presented work involves the hundred-electrode Utah Array, implanted in the motor cortex of human participants, to drive brain-machine interfaces.

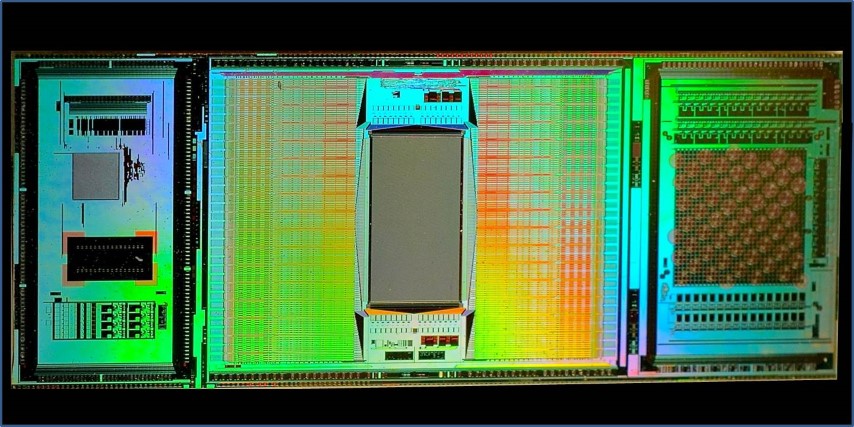

In contrast to those arrays, the new (3rd generation) ETH Array (that's my name for it) contains about 60,000 electrodes (5,000 trodes per mm²), all contained in a 3 mm by 4 mm rectangular portion of a CMOS integrated chip that's about one cm long. The rest of the chip contains eight other modules used for amplification (the signal intensities are in the microvolt range), noise filtering and artifact suppression, multiplexing, analog-to-digital conversion, and electrode selection. The electrodes may be turned on and off in real time (within microseconds) during a single experiment to focus data collection on where the action is. In a single experiment the chip can kick out a terabyte of data, faster than any lab's internet can transmit it to a cloud server for processing. So real-time, local data reduction, both on chip and by in-lab computers, is essential.

Each electrode in the 60,000-strong array is currently just 8 microns on a side, and work is underway on trodes that are just one micron in diameter.
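To get a feel for those numbers, here's a quick back-of-the-envelope check in Python. It is only a sketch: it uses the figures quoted above (60,000 electrodes, a 3 mm by 4 mm area, 8-micron square electrodes) and assumes, purely for illustration, that the electrodes sit on a uniform square grid. The function name `array_geometry` is my own, not anything from the ETH group.

```python
import math

def array_geometry(n_electrodes, width_mm, height_mm, electrode_side_um):
    """Return (density per mm^2, center-to-center pitch in um, covered fraction).

    Assumes a uniform square grid, which is an illustrative simplification.
    """
    area_mm2 = width_mm * height_mm
    density_per_mm2 = n_electrodes / area_mm2
    # Area available to each electrode, in square microns (1 mm^2 = 1e6 um^2).
    cell_area_um2 = 1e6 / density_per_mm2
    # On a square grid the pitch is the side length of that cell.
    pitch_um = math.sqrt(cell_area_um2)
    # Fraction of each cell occupied by the square electrode itself.
    fill_fraction = electrode_side_um ** 2 / cell_area_um2
    return density_per_mm2, pitch_um, fill_fraction

density, pitch, fill = array_geometry(60_000, 3, 4, 8)
print(f"{density:.0f} electrodes/mm^2, pitch ~{pitch:.1f} um, coverage ~{fill:.0%}")
```

Under that square-grid assumption, the quoted density of 5,000 per mm² falls straight out of the area, the electrodes sit roughly 14 microns apart center to center, and they cover about a third of the sensing surface.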

But recording action potentials is just part of what the ETH Array does. It can also stimulate neurons anywhere in the array (at 1.5 volts) while simultaneously recording the microvolt extracellular fluctuations as a spike cruises past a nearby trode. (That's like listening to a whisper amidst a screaming football crowd. It requires fancy artifact suppression.)
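How loud is the crowd relative to the whisper? Here's a rough sketch of the arithmetic. The 1.5-volt stimulation figure is from the talk; the 10-microvolt spike amplitude is my own assumed stand-in for "microvolts", chosen only to illustrate the scale, and `amplitude_ratio_db` is a hypothetical helper name.

```python
import math

def amplitude_ratio_db(large_volts, small_volts):
    """Express the ratio of two voltage amplitudes in decibels."""
    return 20 * math.log10(large_volts / small_volts)

STIM_VOLTS = 1.5      # stimulation amplitude quoted in the talk
SPIKE_VOLTS = 10e-6   # ASSUMED recorded spike amplitude (10 microvolts)

ratio_db = amplitude_ratio_db(STIM_VOLTS, SPIKE_VOLTS)
print(f"Stimulus is ~{STIM_VOLTS / SPIKE_VOLTS:,.0f}x the spike amplitude (~{ratio_db:.0f} dB)")
```

With that assumed spike amplitude, the stimulus is on the order of a hundred thousand times larger than the signal being recorded, which is why the artifact suppression has to be so good.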

As if recording and stimulating spikes weren't enough, the chip can also detect highly localized neurotransmitter release (via cyclic voltammetry).

The neural networks that the chip is designed to interact with are either laid as a slice onto the electrode array or cultured directly in a chamber such that the electrodes are in direct contact with the cultured neurons. Since the trode array lies beneath the neurons, the top surface of the neurons may be simultaneously interrogated by other means, e.g., calcium imaging or immunofluorescence methods.

Professor Hierlemann showed us several videos of the chip in action. In the first, one of several dozen cultured neurons lying on the array is stimulated at its AIS (its axon initial segment). We then see a spike traveling down that axon and into all its branches, and then into the neurons onto which they synapse. You can also see antidromic (reverse) propagation going up into the dendrites.

One of many exciting uses of the array is to decipher the immensely complex functioning of the primate retina, which is exactly what Stanford's Prof. EJ Chichilnisky and his lab are using the ETH Array for. As EJ explained to me, most of the primate retina is silent, i.e., its neurons don't spike (they use continuous, graded potentials instead), which enormously complicates understanding what's going on.

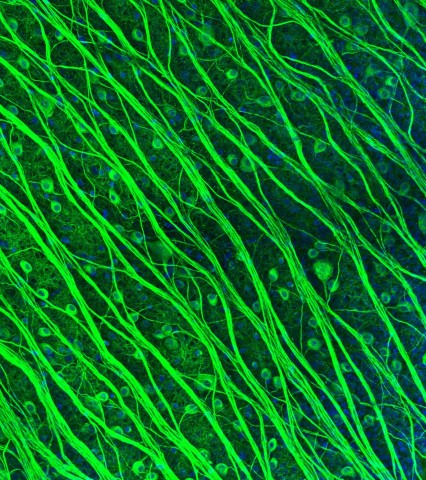

Photomicrograph of retinal ganglion cells (RGCs) by Lauren Grosberg in the Chichilnisky Lab. (In 2016 Lauren won 2nd prize in the Stanford Art of Neuroscience competition.)

Fortunately, the RGCs (the retinal ganglion cells, whose axons form the optic nerve, the output cable of each eye) do spike, since they need to communicate all the way to the lateral geniculate nucleus (in the thalamus) and thence to the visual cortex. Vertebrate brains always use spiking neurons for long-distance communication. Going digital greatly increases fidelity (as it does in cable).

With each RGC nestling directly against an electrode, there's finally the hope of recording every spike from every output neuron in the retina, one of the holy grails of the Obama-era BRAIN Initiative.

As I contemplated this work afterwards, a big light turned on for me. The ETH Array is performing analogously to the retina whose function it's recording.

The retina is also in the signal conditioning business big-time. Much of its non-spiking activity is devoted to light intensity adaptation, artifact suppression, contrast enhancement, multiplexing, and finally analog-to-digital conversion for the long haul to the brain — technology recapitulates biology.

Swiss mountaineers have a custom of taking a swig at the summit of a mountain: a Gipfelschnaps. The ETH Array is just such a dazzling summit.

By the way, Professor Hierlemann's Bio-Electronics Lab welcomes neuroscience collaborators, but you must provide a full-time researcher at your lab dedicated to this collaboration.

PS: I asked whether they're part of the European Human Brain Project (HBP). They're not. Following the big revolt by the European neuroscience community (in 2015), the HBP is now devoted mainly to brain simulation.

High-resolution CMOS MEA (Muller, Ballini, et al.) Lab on a Chip 2015