Leave the driving to us!

I was preparing an essay on what I call BIG (or planetary) machine consciousness when it occurred to me that many of the attendant issues are already raised by the following question:

The answer to that question (which is no!) will help us expose what consciousness is, how it works, and what it will take to make a car (or other robot) conscious. Here are some of the questions I'll try to answer:

Now - the details:

The answer is NO! I weigh in on this question because the internet is rife with misleading answers. (But, that's not a "no" forever; that's a "no" at the moment.)

The crucial and defining property of csns (consciousness) (in humans and other higher animals, eg your dog) is in having a subjective world composed of a collection and succession of subjective states, eg the appearance of your office, your garden, your friend's face, as well as concurrent bodily sensations (your legs, your head, your internal state) and a succession of emotions: elation, pride, cold, pain, jealousy, anxiety, etc. No machine has these or any other subjective states (yet.)

The fundamental enabler is having a giant parallel-processing brain. But it's not simply about having 100 billion neurons or 100 trillion synapses. It's all the simultaneous processing in the brain, which I'll describe. Having that giant brain is a necessary condition, but it's not sufficient. The brain must be doing a very particular type of processing that I will (speculatively) describe in general terms.

Scholarpedia contains a recent overview of mechanisms and properties of consciousness by research pioneer Bernard Baars.

This is the heart of this essay!!! Here I follow Bernard Baars' GWS (global workspace) model as a simple-to-understand explanatory framework. Conscious processing involves the creation of a GWS that is made up of a dynamic, transparent model of yourself and your surroundings. That model most prominently includes one's internal states (eg hunger, awareness, comfort) simultaneously with a model of one's own body that arises from an integration of vision, touch, and proprioception. (Note that none of this, so far, has much to do with intelligence or problem solving.) That self model is seamlessly integrated with a visual model of the outside world as seen from a single point behind the eyes.

My favorite analogy is the executive summary that President Ronald Reagan wanted from his cabinet to summarize the world's state of affairs. Using this, he made his decisions. (Trump functions in the same way - using a very stripped down, highly edited version of reality). Yes, it's funny, but all of us do it all the time. Otherwise, reality would be overwhelming.

This notion of an edited, but still large, model of reality is an essential concept. Here, German philosopher Thomas Metzinger explains it. When Metzinger uses the word "transparent" to describe the model of the world that your brain creates, he actually means invisible to you. The fact that our model of the world (including of our own body) is invisible as a model is why children and most adults mistake what they themselves perceive for actual reality. It is NOT! That simplistic view is called naive realism. What you actually perceive is what I sometimes call a "cartoon world."

The fact that your reality is a stripped down, highly edited cartoon world does not mean that it's necessarily misleading or not useful. It's absolutely useful! Your continued existence depends on it. But, if it weren't a highly filtered, greatly reduced version of the actual world around you, you would be paralyzed with indecision - unable to act.

The world as it actually exists (in your body and outside) is a seething mass of quantum electrodynamics: incomprehensibly detailed and therefore unusable. For example, are you actually perceiving each of the thirty trillion cells in your body? Absolutely not! At best you get a highly reduced feeling of continued existence occasionally augmented by a screaming body part (like a fractured tooth) that is damaged. That screaming body part demands top priority action. That's good. (In contrast, lethal health problems like cancer and atherosclerosis are typically totally silent.)

Your brain usually (but not invariably) knows exactly what's useful to your on-going survival. Evolution has made sure of that. Most parts or functions of the brain that don't directly contribute to survival were eliminated eons ago.

Consciousness displays the relevant part of what's out there. Can I eat it? Will it eat me? Can I mate with it? Or, is it dangerous (or both)? How am I doing? What actions do I need to take now to maintain my well being or to make things better?

Your body's survival agenda determines what gets written to the blackboard of csns.

Professor Anil Seth (University of Sussex) is another star researcher of csns. (By the way, the majority of csns researchers are in Europe; American researchers are still living under the curse of the notorious behaviorists like John Watson and BF Skinner.) In this recent lecture presented to the Royal Institution (London), Prof. Seth speculates on the reason that csns exists. The integrated, fused conscious field of subjective reality provides a (Bayesian) predictive model that organizes and biases our entire incoming sensory world.

That is, what we see and feel, is only half (or less) about our incoming sensory data. The majority of that organizing data comes from what is stored by our brains from prior experience and that, when combined with on-going sensory input, gives rise to our perceived world.

Is this biasing of what we see by what we know, a drawback or an advantage? Professor Seth shows that most often it's an advantage. Our stored world model enables us to make sense of a world full of ambiguity and sparse data (and he includes several amusing examples.) Our biased, conscious view emphasizes the parts of the world that we must attend to and act upon.
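The blending of stored expectation with incoming data can be sketched in a few lines of Python. This is only an illustration of standard Bayesian fusion of two Gaussian estimates, not Prof. Seth's actual model; the function name `fuse` and all the numbers are hypothetical.

```python
def fuse(prior_mean, prior_var, obs_mean, obs_var):
    """Combine a Gaussian prior (what the brain expects) with a Gaussian
    observation (what the senses report), weighting each by its precision."""
    prior_precision = 1.0 / prior_var
    obs_precision = 1.0 / obs_var
    post_var = 1.0 / (prior_precision + obs_precision)
    post_mean = post_var * (prior_precision * prior_mean
                            + obs_precision * obs_mean)
    return post_mean, post_var


# Hypothetical numbers: the prior expects 0, the senses report 10.
# Noisy senses (large variance): the percept stays near the expectation.
foggy_mean, foggy_var = fuse(0.0, 1.0, 10.0, 4.0)
# Reliable senses (small variance): the percept follows the data.
clear_mean, clear_var = fuse(0.0, 1.0, 10.0, 0.25)
print(foggy_mean, clear_mean)
```

With noisy input the estimate lands close to the prior (about 2), and with reliable input it lands close to the observation (about 8). In this toy picture, the less trustworthy the sensory data, the more the stored world model fills in.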

(During the 2016 American presidential election, a friend expressed her concern about the confirmation bias that swayed the results. Yes, I asserted loudly! But it's ubiquitous and, unfortunately, inescapable. Look at the Adelson checkerboard illusion here. You can't get rid of it!)

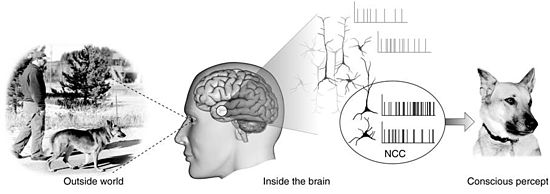

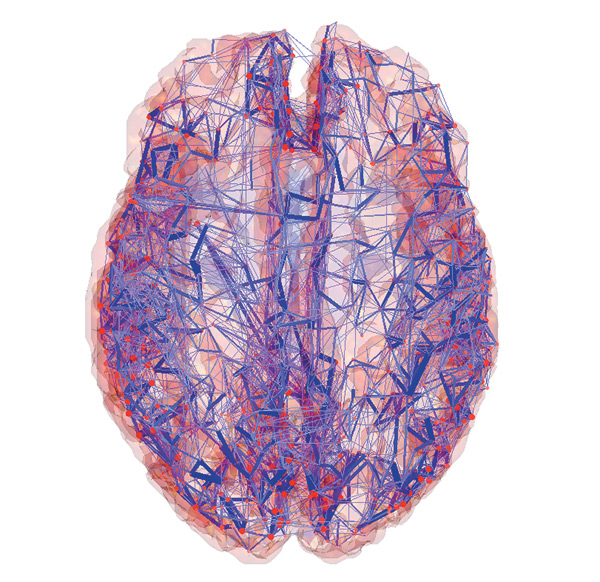

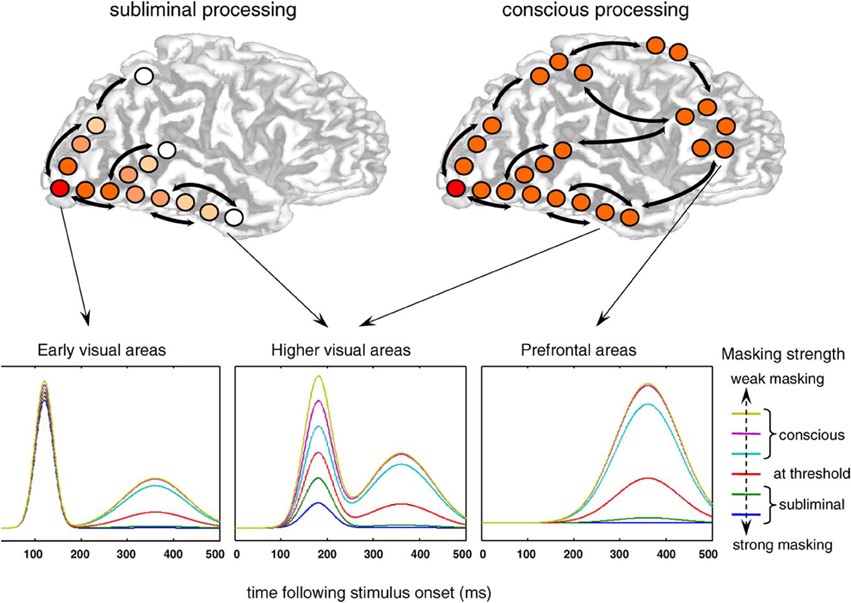

This is an active area of neuroscience, but the simplest answer is that many brain regions must be simultaneously engaged and communicating with one another to create a conscious percept (as opposed to an unconscious response.) Conscious processing involves broad interacting areas of the cortex and it's slow (hundreds of milliseconds.) (A single neural spike arises in only one millisecond.)

The signatures of conscious processing may be seen on EEG or on ECoG as activation of particular, high-frequency (gamma) bands that are the hallmarks of REM (dreaming) sleep as well as conscious waking attention. A superb, popular review of this work is in Professor Stanislas Dehaene's 2014 book, Consciousness and the Brain.

The memorable phrase that csns pioneer (and Nobel laureate) Francis Crick and his collaborator Christof Koch used to describe brain mechanisms of csns is that the front of the brain (the frontal cortex) must be having a two-way conversation with the back of the brain (parietal, temporal, and occipital lobes.) That huge swath of cerebral real estate draws in a lifetime of relevant memories through which incoming sense data is filtered and shaped. The result is your subjective world (Cartoon World.)

Consciousness is essential and a huge help with survival. Besides its role in filtering and simplifying incoming sensory data, it also provides a continuously updated working memory that we use to mull over partial results when solving novel, complex problems or when deciding on a course of action. You can multiply 5 X 7 reflexively but not 17 X 23. (You use conscious, working memory to store intermediate results.) Similarly, csns may help you decide whether to splurge on an impulse buy or save for the future.
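One way the intermediate results for 17 X 23 might be held in working memory (an illustrative decomposition; other splits work equally well):

$$17 \times 23 = (17 \times 20) + (17 \times 3) = 340 + 51 = 391$$

The partial products 340 and 51 are what must be kept in mind until the final addition.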

No! (but it's moving in that direction and superficially looks tantalizingly close.)

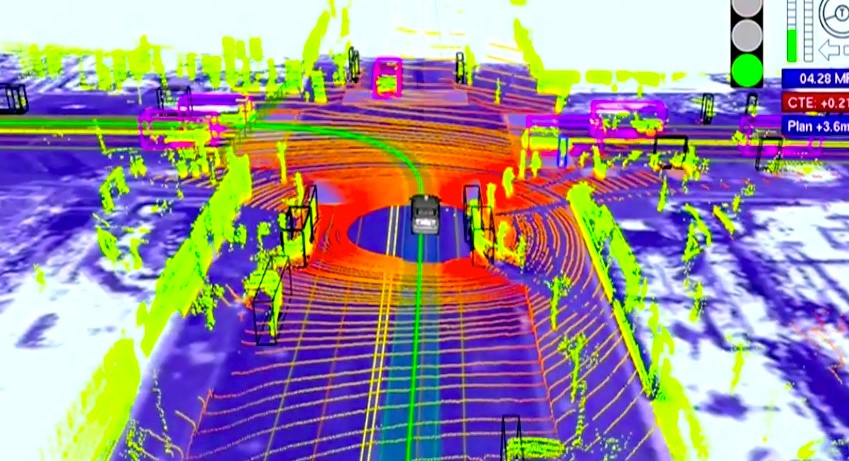

Here's an excellent description of what the car computes from moment to moment as it drives down the highway by its leading (former) architect, Chris Urmson. (This is a must-see video, if you want to know how driverless cars work. Chris has since left Google and is now running his own driverless car company (Aurora Innovation).) Take a look at the models computed by the Google car of the other cars and cyclists surrounding it as it drives along. Isn't that also a filtered, reduced, cartoon world of the essential aspects of the universe surrounding the car? Yes! (That's why I'm writing this essay.)

That model, unlike the computations in IBM's Watson or DeepMind's AlphaGo, is definitely a step toward machine csns. So, what's missing?

There are several aspects to being conscious, of being someone (this is Anil Seth's list):

Basically, what's missing is the "I" or "me" of the car itself. As yet, it has no subjective states. Let's go down the list.

Bodily self. The Google car is not experiencing its body. It seems that that should be easy to engineer but I don't know how. Yes, it's got proprioceptive sensors galore (eg GPS and a precise odometer that measures its exact centimeters traveled based on tire circumference) but that's not being written to a body model that the car interprets as its experience.

Perspectival self. This is the most seductive piece of "the driverless car as conscious agent" argument. The car not only has a detailed model of large objects surrounding it, but it can arbitrarily alter its geometric perspective, just as the CGI virtual cameras do in big budget action movies. You (the movie goer or video game player) can potentially see the world from above or from any side.

There is, however, with the driverless car, no agent that is doing the seeing. But, you might reply, there is really no "little man inside your head" that is doing the seeing in human beings. Csns is an illusion. I agree with that, but I'm just not sure how to re-create that illusion in the car. It seems it should be possible.

Note: this is not a linguistic issue. The fact that the car or a small child or dog can or cannot talk has little to do with it.

Volitional self. A key enabler of the illusion of csns is the apparent locus of self. It's the fact that there's a conscious self centered on the body and a perceived locus of control (I will my hand to move; then, I perceive it moving.) Or, I move my hand towards an object, and then I feel that object touching my hand exactly at the moment when my vision tells me they're in contact.

That simultaneity of sensations is what helps create the illusion of a conscious world with "me" situated inside it. It seems it should be possible to re-create that illusion in the car (with some resulting advantage.)

Social self. Surprisingly, this is an aspect of the car that the Google engineers have been forced to address.

It arises most obviously when a car arrives simultaneously at a 4-way intersection with other cars. How do you signal other drivers that you are going ahead?

Human drivers usually (but not invariably) do this by following the law - the car to your right has the right-of-way. But most drivers are more pragmatic. When they feel it's their turn to go, they inch out to let the other drivers know they will soon commit to going ahead. That dance is a social act that the other cars need to sense, and you (the driverless car) must also sense their response, etc. It's not easy engineering, but it's already in Google's cars.

A: That may be accurate. That's why I bothered with this essay. And, that's why I estimate the creation of machine csns within several decades. I believe the key piece that remains is to embed the visual models that the car computes from its sensors into a visual/proprioceptive model of the car itself. (And a model of itself as a locus of control; that is, eg, that it's the "I" (the car, itself) that ordered the turning of the steering wheel, based on its having sensed the road veering to the left.)

They are overlapping spheres of competence, and the human brain remarkably pulls off both. As humans, to ourselves, our most remarkable feature is our intelligence. After all, non-human primates, and other mammals seem to have similarly detailed consciousness.

I thought that driverless cars would help to expose this contrast, but actually other applications of AI, especially expert consultants or personal assistants, or robots are better platforms for that discussion.

All the considerable intelligence of driverless cars is funneled into just three actions: how much to press on the "gas pedal," how much to press on the brakes, and where to direct the "steering wheel." Contrast that, eg, with the intelligence that was required in the 2015 DARPA Robotics Challenge. Those legged robots had to climb over piles of debris, open a door, go up a ladder, close a valve, and install a pump. No robot accomplished everything. The planning and motor skills involved are immensely difficult (even though an 8-year-old child could easily accomplish any of this.)

Or, how about the intelligence involved in doing Nobel prize winning research or engineering. That level of intelligence is far beyond what can be envisaged for current machines.

Invention involves seeing in the "mind's eye" simulations of hypothetical scenarios and planned actions. It also involves careful continuous deliberation about resource allocation (time, effort, and materials.)

With that kind of highly advanced intelligence there is no question of being able to do it without consciousness (at least in humans.) Subconscious processing (eg, celebrated tales of key insights occurring in dreams) may contribute to big discoveries, but creative invention is highly effortful, involving heavy use of working memory and conscious effort.

Despite that, once a complex skill is learned, it may be non-consciously re-enacted, as everyone from concert pianists to bicycle riders and drivers realizes. The difficulty (and conscious effort) comes in learning the skill, not necessarily in its execution once it's mastered.

The bottom line is that consciousness may be implemented on machines well before advanced machine intelligence is realized. My prediction is that the Turing test will be cracked before 2025; the Feigenbaum test (displaying Nobel level intelligence in three separate domains) may be 50 or more years away.

There are several potential advantages of cars that are conscious. And, here I'm not talking about a car with the full suite of human qualia and emotions (that's far into the future), but simply one with enough of the half dozen properties of csns discussed above (bodily self, etc.) that most engineers and neuroscientists would need to concede that it's conscious in many respects (as they do now with mice and birds.)

Here are some advantages: facilitated learning of correct responses in new situations. Most of the actions the cars now take need to be carefully programmed by hand. (Chris Urmson shows the car encountering a woman in a wheel chair trying to shoo a duck off the road.) A conscious car might be able to learn what to expect and how to respond to that situation without being programmed.

Another advantage might be an integration of (the car's) bodily assessment and road conditions with the goals of the driver and the trip. This is a fun one that gets at the survival advantages of csns. How important is this particular trip to you (the driver)? Is it important enough to ignore snow on the windshield, impossible anticipated road congestion and accidents, and flaky brakes?

Accurate assessments in the face of noisy or missing data. Humans (for better or worse) use their csns to assist in the interpretation of noisy, distorted data. When the road is obscured by rain or fog or by occluding objects, humans can fill in the data (just as we constantly do with the anatomic blind spots in both our retinas.) This might increase the performance of driverless cars. Or it may be just the opposite.

Disadvantages of conscious cars. Filling in noisy or missing data may obviously lead to accidents, just as it does with human drivers. This is clearly a trade-off.

Perhaps a conscious car would try to intervene too much. I'm conjuring up the image of Marvin, the Paranoid Android from The Hitchhiker's Guide to the Galaxy, who would probably be bored out of his mind and constantly whining about his plight as your chauffeur. (Star Wars' C-3PO is another whining robot that treads heavily on its owner's patience.)

A: Linguistic competence is a crucial part of human intelligence but it's not essential for csns. For example, the family dog doesn't have it, nor does the family's 1 year old baby.

For liability reasons, an argument is being voiced to have driverless cars use language to justify their actions. It's easy to imagine a natural language interface that would enable the car to tell you "Please, take the wheel. The rain is obscuring my vision and there's too much traffic."

A: I'll let you read about the trolley problem. But for now, here's an informative example. You're barreling down the highway and suddenly there's a school bus full of kids directly in front of you. You (the driverless car) can either kill them or pilot the car off the side of the bridge resulting in the certain death of your one and only passenger. You (the car) have only half a second to decide.

Philosophers and ethicists expound endlessly on this scenario. But, one crucial fact is that we human drivers are more hopeless at this task (when the milliseconds are flying by) than a petabyte-crunching driverless car that has continually rehearsed thousands of these scenarios.

Whether car buyers will buy a driverless car that sacrifices its owner when the going gets tough is another question. But that's irrelevant to this discussion.

What's relevant here is the way a lifetime of social experience and a moral framework individually informs our actions. If a split-second decision needs to be made, I personally would rather leave it up to the machine. Very difficult trade-offs, eg governmental investment in nuclear power plants, will not be relegated solely to machines for several decades (if ever.) (But even here, I'd really like to have big server farms assisting the experts with these complex decisions.)

A: My view is that conscious machines (or at least highly intelligent, simulated conscious ones) are inevitable and can't be stopped even by concerted legislation. Nor should they be. The benefits of these machines are just too great. While driverless cars will occasionally make fatal errors, the US death toll from car accidents (35,000 per year) will plummet.

Discussions by economists of resulting unemployment are ubiquitous (and voice legitimate concerns.) The character and utility function of robots (what they value) will, in the next few decades, be dictated by their human designers. Consumers will mainly buy friendly robots (and cars)... the military is a different story (and here my main worries are with totalitarian regimes like North Korea, Trump notwithstanding.)

Demotion of human status: yes, but with important provisos. The population of horses in the US peaked in 1914 and has dwindled ever since. People still enjoy owning horses, but they're not essential, as they were in 1914. I believe the same thing will happen with humans. (And the sooner, the better; we're making a mess of planet Earth.) While we may find ourselves demoted, it will happen slowly. The key reason is that human neural computation is massively efficient. All it takes is 20 watts (about 20 calories per hour) to keep your brain going. It's going to be decades before Intel or Nvidia can duplicate that.
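The 20 watt figure can be checked with a quick unit conversion, assuming "calories" here means dietary kilocalories and taking 1 kcal = 4184 J:

$$20\ \text{W} \times 3600\ \tfrac{\text{s}}{\text{h}} = 72{,}000\ \tfrac{\text{J}}{\text{h}}, \qquad \frac{72{,}000\ \text{J/h}}{4184\ \text{J/kcal}} \approx 17\ \tfrac{\text{kcal}}{\text{h}}$$

That is roughly 400 kcal per day, which is in line with the "about 20 calories per hour" estimate.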

It should be plain that I'm a driverless car enthusiast, and when they're designed to be conscious, so much the better. Bring 'em on!

published July 2017

(Substantive comments may be emailed to bob AT bobblum DOT com. With your permission excerpts may be posted here.)